While deep learning techniques have become extremely popular for solving a broad range of optimization problems, methods to enforce hard constraints during optimization, particularly on deep neural networks, remain underdeveloped. Inspired by the rich literature on meshless interpolation and its extension to spectral collocation methods in scientific computing, we develop a series of approaches for enforcing hard constraints on neural fields, which we refer to as Constrained Neural Fields (CNF). The constraints can be specified as a linear operator applied to the neural field and its derivatives. We also design specific model representations and training strategies for problems where standard models may encounter difficulties, such as poor conditioning of the system, memory consumption, and the capacity of the network when it is constrained. We demonstrate our approaches on a wide range of real-world applications. Additionally, we develop a framework that enables highly efficient model and constraint specification and can be readily applied to any downstream task where hard constraints must be explicitly satisfied during optimization.
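The meshless-interpolation idea can be sketched in a few lines: write the field as a weighted sum of basis functions, apply each constraint operator to every basis function, and solve the resulting linear system so that all constraints hold exactly. This is a minimal 1D illustration, not CNF's implementation. It assumes a Gaussian kernel with a hand-picked width `S` in place of a learned neural basis, supports only two operators (point value `"val"` and first derivative `"der"`), and uses the symmetric Hermite form in which basis function j is constraint j applied to the kernel. The names `kernel_entry` and `fit_constrained_field` are hypothetical.

```python
import numpy as np

# Kernel width: a hypothetical hyperparameter chosen for this sketch.
S = 0.3


def kernel_entry(op_x, x, op_xi, xi):
    """Apply op_x (in x) and op_xi (in xi) to k(x, xi) = exp(-(x - xi)**2 / (2 S**2)).

    Each op is 'val' (identity) or 'der' (first derivative).
    """
    r = x - xi
    k = np.exp(-r**2 / (2 * S**2))
    if op_x == "val" and op_xi == "val":
        return k
    if op_x == "val" and op_xi == "der":
        return r / S**2 * k                  # dk/dxi
    if op_x == "der" and op_xi == "val":
        return -r / S**2 * k                 # dk/dx
    return (1 / S**2 - r**2 / S**4) * k      # d2k/(dx dxi)


def fit_constrained_field(constraints):
    """constraints: list of (op, point, target). Returns field(x, op='val') meeting all of them."""
    ops = [c[0] for c in constraints]
    pts = np.array([c[1] for c in constraints], dtype=float)
    b = np.array([c[2] for c in constraints], dtype=float)
    # A[i, j] = constraint i applied to basis function j (= constraint j applied to the kernel).
    # A is symmetric positive definite: the Gaussian kernel is positive definite and
    # the constraint functionals are linearly independent.
    A = np.array([[kernel_entry(oi, xi, oj, xj) for oj, xj in zip(ops, pts)]
                  for oi, xi in zip(ops, pts)])
    beta = np.linalg.solve(A, b)

    def field(x, op="val"):
        return sum(bj * kernel_entry(op, x, oj, xj) for bj, oj, xj in zip(beta, ops, pts))

    return field


f = fit_constrained_field([
    ("val", 0.0, 0.0), ("der", 0.0, 0.0),   # value and slope pinned at x = 0
    ("val", 0.5, 0.3),
    ("val", 1.0, 1.0), ("der", 1.0, 0.0),   # value and slope pinned at x = 1
])
```

Because basis function j is built from constraint j, a value constraint and a derivative constraint may share the same point without making the system singular.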

Applications

Fermat's Principle

Fermat's principle states that the path taken by a ray of light between two given points is the path that can be traversed in the least time. CNF optimizes the optical trajectory of a ray in a 2D medium whose refractive index increases along the y-axis, given the entry point P0 and the exit point P1.

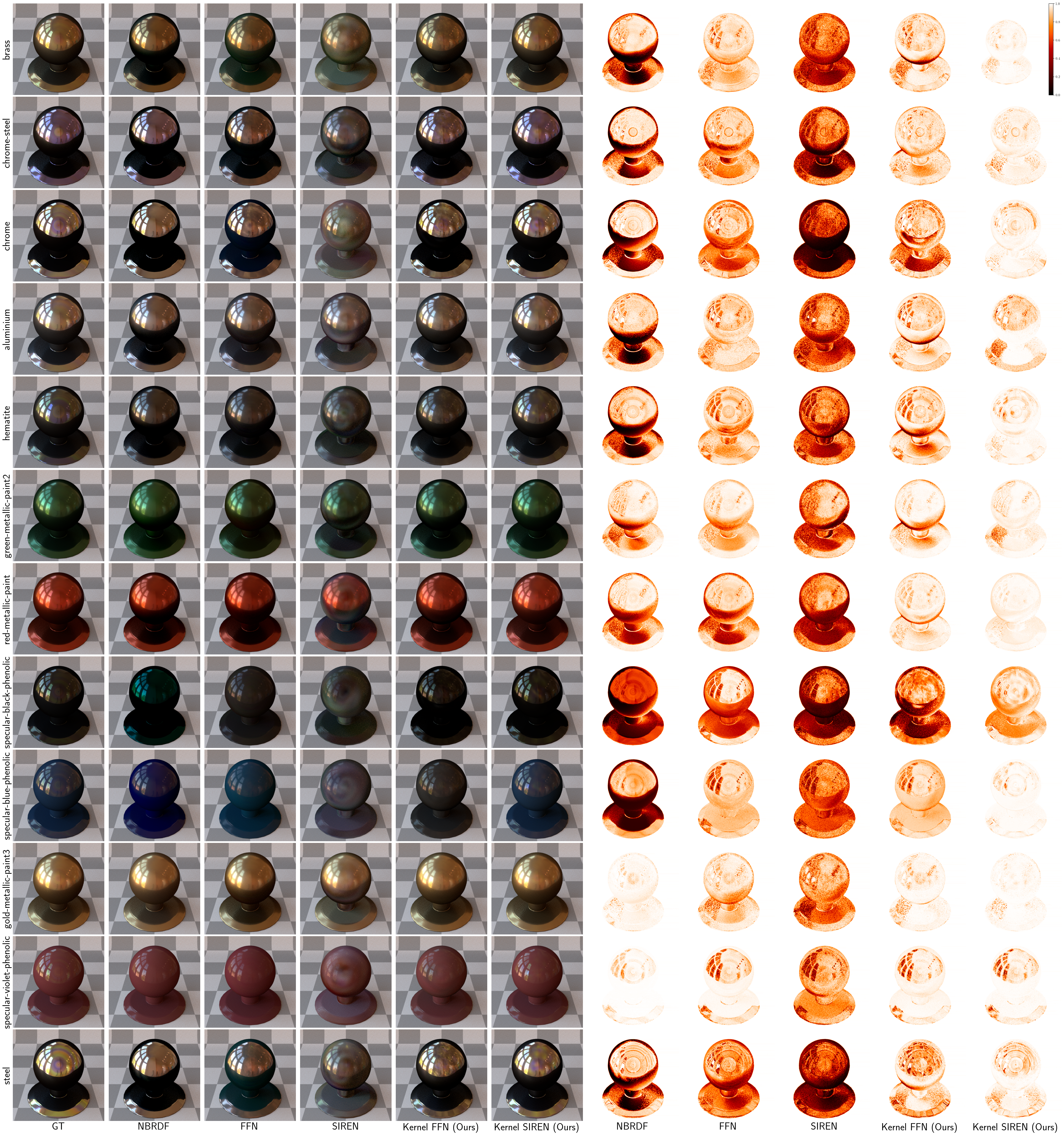
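A minimal sketch of the underlying optimization, assuming a discretized ray (a polyline) instead of a neural field and a hypothetical refractive index n(y) = 1 + ALPHA * y. The optical path length, which is proportional to travel time, is minimized by plain gradient descent with an analytic gradient; the two endpoints are never moved, so they play the role of hard constraints. All names, the step size, and the number of vertices are illustrative assumptions.

```python
import numpy as np

ALPHA = 1.0  # hypothetical index gradient: n(y) = 1 + ALPHA * y


def optical_path_length(path):
    """Sum over segments of n(segment midpoint) * segment length (proportional to travel time)."""
    d = np.diff(path, axis=0)
    seg_len = np.linalg.norm(d, axis=1)
    n_mid = 1.0 + ALPHA * 0.5 * (path[1:, 1] + path[:-1, 1])
    return float(np.sum(n_mid * seg_len))


def optical_path_grad(path):
    """Analytic gradient of optical_path_length with respect to every vertex."""
    d = np.diff(path, axis=0)
    seg_len = np.linalg.norm(d, axis=1)
    n_mid = 1.0 + ALPHA * 0.5 * (path[1:, 1] + path[:-1, 1])
    u = (n_mid / seg_len)[:, None] * d  # n_k * d(length_k)/d(end vertex)
    grad = np.zeros_like(path)
    grad[1:] += u                       # end vertex of segment k
    grad[:-1] -= u                      # start vertex of segment k
    half = 0.5 * ALPHA * seg_len        # length_k * d(n_k)/d(y of either vertex)
    grad[1:, 1] += half
    grad[:-1, 1] += half
    return grad


def optimize_ray(p0, p1, n_vertices=21, lr=0.005, steps=5000):
    """Gradient descent on the interior vertices; p0 and p1 stay fixed (hard constraints)."""
    path = np.linspace(p0, p1, n_vertices)  # straight-line initialization
    for _ in range(steps):
        g = optical_path_grad(path)
        g[0] = 0.0
        g[-1] = 0.0
        path -= lr * g
    return path


P0 = np.array([0.0, 1.0])
P1 = np.array([1.0, 1.0])
ray = optimize_ray(P0, P1)
```

Because n grows with y, the optimized ray sags toward smaller y between P0 and P1, trading a slightly longer geometric path for a lower refractive index along most of it.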

Learning Material Appearance

By sampling constraint points around the specular highlights, CNF learns high-frequency details more accurately than the methods it is compared against.
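One hypothetical way to concentrate constraint points around a highlight, assuming the highlight can be modeled as a Phong-style lobe of exponent s around the mirror direction (an illustration, not necessarily the sampling scheme CNF uses): draw directions by inverse-CDF sampling so that their density over solid angle is proportional to cos^s of the angle to the lobe axis. A larger s packs the samples more tightly, which is where the high-frequency detail sits. `sample_phong_lobe` is a hypothetical helper.

```python
import numpy as np


def sample_phong_lobe(shininess, n_samples, rng):
    """Unit vectors around the +z axis (standing in for the mirror direction),
    distributed over solid angle with density proportional to cos(theta)**shininess.

    Inverse-CDF sampling: the CDF in theta is 1 - cos(theta)**(s + 1), so
    cos(theta) = u1**(1 / (s + 1)) with u1 uniform; phi is uniform in [0, 2*pi).
    """
    u1 = rng.random(n_samples)
    u2 = rng.random(n_samples)
    cos_t = u1 ** (1.0 / (shininess + 1.0))
    sin_t = np.sqrt(np.clip(1.0 - cos_t**2, 0.0, None))
    phi = 2.0 * np.pi * u2
    return np.stack([sin_t * np.cos(phi), sin_t * np.sin(phi), cos_t], axis=1)


rng = np.random.default_rng(0)
highlight_dirs = sample_phong_lobe(100.0, 1000, rng)  # tight lobe: sharp highlight
hemisphere_dirs = sample_phong_lobe(0.0, 1000, rng)   # s = 0: uniform over the hemisphere
```

With s = 100 the expected value of cos(theta) is (s + 1)/(s + 2), about 0.99, and roughly 90% of samples lie within about 12 degrees of the lobe axis; with s = 0 the samples spread uniformly over the hemisphere.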

Interpolatory Shape Reconstruction

CNF can produce plausible implicit surfaces from point clouds by exactly interpolating the points and their normals (hard constraints) while also optimizing a penalty term (soft constraint) that encourages the field to satisfy the Eikonal equation.
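The soft part of this setup can be illustrated with a generic Eikonal penalty, the mean of (|∇f| - 1)^2 over sample points, which vanishes for a true signed distance function. For self-containment this sketch estimates ∇f with central differences (an assumption; a neural field would typically be differentiated with automatic differentiation) and compares two hypothetical implicit functions with the same zero level set, a circle of radius 0.5: its exact signed distance and a quadratic that is not a distance function.

```python
import numpy as np


def eikonal_penalty(f, points, h=1e-5):
    """Mean of (|grad f| - 1)**2 over points; grad f estimated by central differences."""
    dim = points.shape[1]
    grad = np.empty_like(points)
    for axis in range(dim):
        step = np.zeros(dim)
        step[axis] = h
        grad[:, axis] = (f(points + step) - f(points - step)) / (2.0 * h)
    return float(np.mean((np.linalg.norm(grad, axis=1) - 1.0) ** 2))


def circle_sdf(p):
    """Exact signed distance to a circle of radius 0.5 centered at the origin."""
    return np.linalg.norm(p, axis=1) - 0.5


def circle_quadratic(p):
    """Same zero level set, but |grad f| = 2|p| is not 1, so it is not a distance field."""
    return np.sum(p**2, axis=1) - 0.25


samples = np.random.default_rng(0).uniform(-1.0, 1.0, size=(1000, 2))
```

Both functions interpolate the same surface, so an interpolation constraint alone cannot tell them apart; the Eikonal term is what favors the distance-like one.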

CNF scales to a large number of constraints; for example, it can reconstruct surfaces from point clouds of 10k points.
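To see why scaling to many constraints is non-trivial, consider a hypothetical formulation that solves all constraints as one dense linear system (an assumption for illustration, not a description of how CNF stores its system). With one value constraint plus three normal components per 3D point, 10k points already give 40k scalar constraints. `dense_system_bytes` is a hypothetical helper.

```python
def dense_system_bytes(n_points, constraints_per_point, bytes_per_entry=4):
    """Bytes for one dense n x n matrix with n = n_points * constraints_per_point.

    bytes_per_entry=4 assumes float32 storage.
    """
    n = n_points * constraints_per_point
    return n * n * bytes_per_entry


values_only = dense_system_bytes(10_000, 1)         # f(x_i) = 0 at each point
values_and_normals = dense_system_bytes(10_000, 4)  # f(x_i) = 0 and grad f(x_i) = n_i in 3D
```

That is 400 MB for the value constraints alone and 6.4 GB once normals are added, before any solver workspace.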

Self-Tuning PDE Solver

CNF can automatically fine-tune the hyperparameters of a proposed skewed radial basis function to improve the performance of the Kansa method, a meshless PDE solver, on irregular input grids.
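A minimal sketch of the standard Kansa (unsymmetric collocation) method in 1D, assuming a plain multiquadric basis with a fixed shape parameter `c` as a stand-in for the skewed radial basis function, whose form is not given here. The shape parameter is the kind of hyperparameter the text says CNF tunes automatically; in this sketch it is simply set by hand. The test problem, u'' = -π² sin(πx) on [0, 1] with u(0) = u(1) = 0 and exact solution sin(πx), and the jittered grid are illustrative assumptions.

```python
import numpy as np


def mq(r, c):
    """Multiquadric basis sqrt(r**2 + c**2)."""
    return np.sqrt(r**2 + c**2)


def mq_dxx(r, c):
    """Second derivative of the multiquadric in 1D: c**2 / (r**2 + c**2)**1.5."""
    return c**2 / (r**2 + c**2) ** 1.5


def kansa_solve_1d(x, f, g_left, g_right, c):
    """Kansa collocation for u'' = f on [x[0], x[-1]] with Dirichlet boundary values.

    Centers coincide with the sorted collocation points x (irregular spacing allowed).
    Returns a callable approximation u(x_eval) for a 1D array x_eval.
    """
    r = x[:, None] - x[None, :]
    A = mq_dxx(r, c)       # interior rows: apply d2/dx2 to every basis function
    A[0] = mq(r[0], c)     # boundary rows: plain point evaluation
    A[-1] = mq(r[-1], c)
    b = f(x)
    b[0], b[-1] = g_left, g_right
    lam = np.linalg.solve(A, b)
    return lambda xe: mq(np.asarray(xe)[:, None] - x[None, :], c) @ lam


rng = np.random.default_rng(0)
n = 25
x = np.linspace(0.0, 1.0, n)
x[1:-1] += rng.uniform(-0.3, 0.3, n - 2) * (x[1] - x[0])  # jittered, irregular grid
u = kansa_solve_1d(x, lambda t: -np.pi**2 * np.sin(np.pi * t), 0.0, 0.0, c=0.2)
```

Accuracy and conditioning of this solve depend strongly on `c`, which is why treating basis hyperparameters as quantities to optimize, rather than fixing them by hand, is attractive.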